Most "render approval workflows" fail for a simple reason: feedback arrives as a drip. One comment at a time. One screenshot at a time. One more "small thing" after you have already started correcting.

That pattern feels collaborative, but it is operationally toxic.

Drip feedback turns a clean production pass into an endless loop of re-open, re-check, re-export, and re-approve. It is the fastest way to destroy throughput.

Why drip feedback destroys throughput

Drip feedback is expensive because every new note triggers a full re-entry cost:

- Context switch cost: your team must re-open the scene, rebuild mental state, and re-locate the exact decision.

- Cascade cost: one late change often forces re-QA and re-export across multiple views or variants.

- Coordination cost: new comments arrive after other stakeholders have already "approved," creating new alignment work.

- Version noise: you end up with six "almost final" iterations, and nobody knows what is actually approved.

The result is predictable: your catalog timeline becomes unknowable. A scalable render approval workflow does the opposite. It turns review into a bounded window and a bounded correction pass.
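To make the re-entry cost concrete, here is a rough back-of-the-envelope sketch in Python. The function name and every number in it are hypothetical, chosen only for illustration. It assumes each correction pass pays one fixed re-entry cost (re-open, re-QA, re-export) on top of the time needed to fix each note.

```python
def total_minutes(notes: int, fix_minutes: int, reentry_minutes: int, passes: int) -> int:
    """Time spent fixing notes plus one re-entry cost per correction pass."""
    return notes * fix_minutes + passes * reentry_minutes


# Hypothetical figures, not measurements.
NOTES = 12            # feedback notes received on one render set
FIX_MINUTES = 10      # time to apply a single note
REENTRY_MINUTES = 45  # re-open, re-QA, and re-export for one pass

# Drip feedback: every note arrives alone, so every note is its own pass.
drip = total_minutes(NOTES, FIX_MINUTES, REENTRY_MINUTES, passes=NOTES)

# Batch feedback: all notes are corrected in a single pass.
batch = total_minutes(NOTES, FIX_MINUTES, REENTRY_MINUTES, passes=1)

print(drip, batch)  # 660 165
```

Under these assumed figures the fixing time is identical in both cases (120 minutes). The whole difference comes from how many times the re-entry cost is paid.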

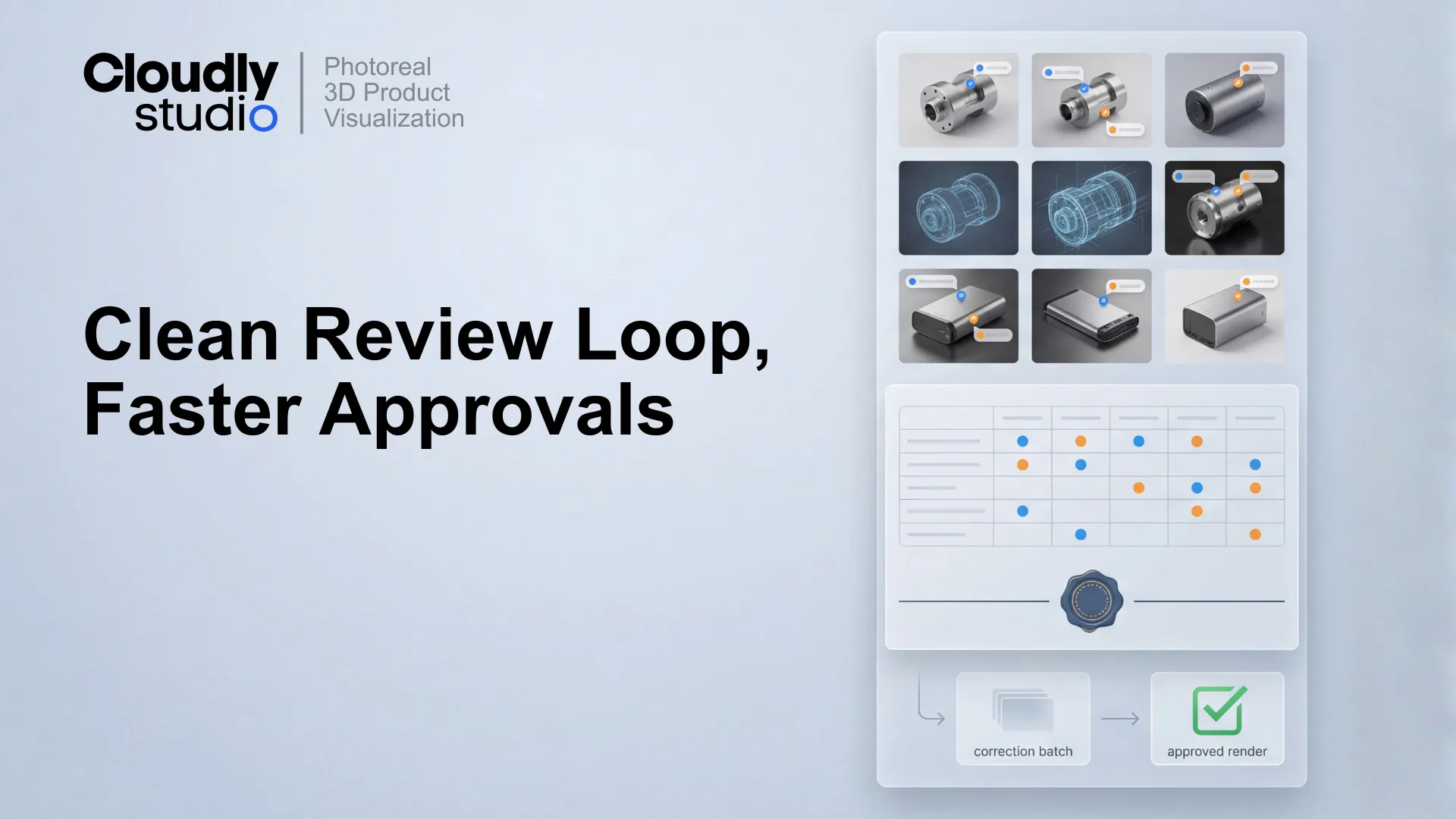

The core model: parallel checks, then one correction batch

To cut correction loops, you need two things happening at the same time:

Technical reviewers validate engineering intent: ports, labels, interface language, fasteners, proportions, finishes, safety marks, and any "must match" details.

Brand reviewers validate brand intent: lighting, shadow discipline, framing, cropping, colour management, and visual consistency across the set.

Bad workflow vs Batch workflow

Pre-submit accuracy list: prevent surprises before review

Most drip corrections are not "nice-to-have improvements." They are unresolved inputs that show up late. The fix is a Pre-submit accuracy list completed before anyone reviews pixels.

The 7-step workflow (what "one clean batch" actually looks like)

That workflow is boring on purpose. Boring is how you get predictable throughput.

What to do when stakeholders disagree

Disagreement is normal. The failure mode is letting disagreement become drip feedback. The feedback owner must resolve conflicts before batch lock. The sheet can contain "Option A vs Option B," but not "Open debate."

If a decision truly cannot be made, capture it as a single row (Decision needed, Owner, Deadline, Impact). Then lock the rest of the batch. Do not block the whole correction pass because two people disagree on the intensity of a highlight.
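One way to picture that rule is a minimal sketch in Python. The `FeedbackRow` type, its field names, and the `split_batch` helper are hypothetical names invented for this illustration, not the format of any particular sheet or tool. The idea is that resolved rows get locked and corrected now, while each open decision stays as one row that must carry an owner, a deadline, and an impact.

```python
from dataclasses import dataclass
from datetime import date
from typing import List, Optional, Tuple


@dataclass
class FeedbackRow:
    note: str
    owner: str
    resolved: bool = True            # False marks a "Decision needed" row
    deadline: Optional[date] = None  # required for "Decision needed" rows
    impact: str = ""                 # required for "Decision needed" rows


def split_batch(rows: List[FeedbackRow]) -> Tuple[List[FeedbackRow], List[FeedbackRow]]:
    """Return (locked, pending). Locked rows go into the correction pass now."""
    locked = [r for r in rows if r.resolved]
    pending = [r for r in rows if not r.resolved]
    for r in pending:
        # An open decision is only acceptable with a deadline and a stated impact.
        if r.deadline is None or not r.impact:
            raise ValueError(f"Decision row needs a deadline and an impact: {r.note!r}")
    return locked, pending


rows = [
    FeedbackRow("Port label reads USB-A, should be USB-C", owner="Engineering"),
    FeedbackRow("Crop hero view tighter", owner="Brand"),
    FeedbackRow("Highlight intensity: Option A vs Option B", owner="Brand",
                resolved=False, deadline=date(2025, 1, 15),
                impact="Affects hero view only"),
]
locked, pending = split_batch(rows)
print(len(locked), len(pending))  # 2 1
```

The open highlight decision does not hold back the two resolved rows. It waits in its own row until the owner decides.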

Implementation notes (so it works in the real world)

- Make the sheet the only "official" channel: People will still message. Your rule is: "Please add it as a row in the sheet."

- Time-box the review window: 24 to 48 hours is typical. More than that invites drip behaviour.

- Enforce revision IDs: Every review package must state "Reviewing: R0" and every delivery must state "Delivered: R1."

- Treat "new feedback after lock" as a different cycle: This is the emotionally hard part. It is also the part that saves you.
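The notes above can be sketched together in a few lines of Python. This is an illustration under stated assumptions, not a real tool: the `ReviewCycle` class and its method names are hypothetical, the window defaults to 48 hours, and the revision number is assumed to go up by one per cycle (so reviewing R0 produces delivery R1).

```python
from dataclasses import dataclass, field
from datetime import datetime, timedelta
from typing import List


@dataclass
class ReviewCycle:
    revision: int                             # 0 means the package says "Reviewing: R0"
    opened_at: datetime
    window: timedelta = timedelta(hours=48)   # assumed time-box for the review
    rows: List[str] = field(default_factory=list)        # feedback in this batch
    next_cycle: List[str] = field(default_factory=list)  # feedback after lock

    @property
    def lock_at(self) -> datetime:
        return self.opened_at + self.window

    def reviewing_label(self) -> str:
        return f"Reviewing: R{self.revision}"

    def delivered_label(self) -> str:
        return f"Delivered: R{self.revision + 1}"

    def submit(self, note: str, at: datetime) -> bool:
        """Add a note. Returns True if it made this batch, False if it was deferred."""
        if at < self.lock_at:
            self.rows.append(note)
            return True
        # After lock, the note is not lost. It simply belongs to the next cycle.
        self.next_cycle.append(note)
        return False


opened = datetime(2025, 1, 6, 9, 0)
cycle = ReviewCycle(revision=0, opened_at=opened)
in_batch = cycle.submit("Safety mark missing on rear view", opened + timedelta(hours=3))
late = cycle.submit("One more small thing", opened + timedelta(hours=50))
print(cycle.reviewing_label(), "|", cycle.delivered_label())
```

Routing late notes into `next_cycle` instead of rejecting them keeps the rule easy to enforce: nothing is ignored, but nothing reopens a locked batch either.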

Asset Pack: The Batch Review System

You don't need more reviewers. You need a workflow that prevents unbounded corrections. Get the exact formats we use to lock production.